Welcome to the ultimate guide on becoming a Kubernetes deployment virtuoso.

Use Case and Creating a Deployment

When it comes to Kubernetes, understanding the use case and creating a deployment are crucial steps in mastering deployment strategies. A use case is simply a real-world scenario where Kubernetes can be applied to solve a problem or achieve a goal. For example, a use case could involve deploying a web application that requires high availability and scalability.

To create a deployment in Kubernetes, you define a manifest file, usually written in YAML. The manifest includes metadata about the deployment, such as its name and labels, and the desired number of replicas, which determines how many instances of the application will be running. It also contains a Pod template that describes the containers to run and a label selector that tells the deployment which Pods belong to it.

Once the manifest file is created, you can apply it with “kubectl apply -f” followed by the file name, or send it to the Kubernetes API directly. Kubernetes then creates a ReplicaSet for the deployment, schedules the necessary pods, and manages the lifecycle of the application.
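
As a rough illustration of what such a manifest contains, the sketch below builds one as a Python dictionary and prints it as JSON, which kubectl accepts alongside YAML. The name “web”, the image “nginx:1.25”, and the helper `make_deployment` are assumptions made for this example, not part of any Kubernetes API.

```python
import json


def make_deployment(name, image, replicas=3):
    """Build an apps/v1 Deployment manifest as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels in the Pod template below.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
        },
    }


# Hypothetical application name and image, chosen only for illustration.
manifest = make_deployment("web", "nginx:1.25", replicas=3)
print(json.dumps(manifest, indent=2))
```

Saving the printed JSON to a file and passing that file to “kubectl apply -f” should create the deployment on a cluster you have access to.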

One important aspect to consider when creating a deployment is reliability. A deployment keeps the requested number of replicas running and replaces pods that fail. For changing workloads, Kubernetes supports autoscaling through the HorizontalPodAutoscaler, which adds or removes pods based on observed metrics such as CPU utilization. This helps the application handle increased traffic.

Load balancing is another key factor in deployment strategies. A Kubernetes Service spreads traffic across the pods that match its selector, and an ingress controller such as Nginx or an external load balancer can route outside traffic to that Service. Spreading traffic among the pods improves overall performance and the customer experience.

Additionally, namespaces in Kubernetes allow for the segmentation of resources and provide a way to organize and isolate deployments, making it easier to manage and scale complex applications.

Pod-template-hash label and Label selector updates

| Label | Description |
|---|---|
| pod-template-hash | A label that the Deployment controller adds to every ReplicaSet a Deployment creates or adopts, and therefore to every Pod those ReplicaSets create. Its value is a hash of the Pod template, which includes the container spec, volumes, and other Pod settings. The label keeps the ReplicaSets of one Deployment from overlapping during rolling updates and should not be changed by hand. |
| Label selector | A mechanism used to select Pods based on their labels. It defines a set of labels and values that Pods must carry. Deployments and ReplicaSets use label selectors to decide which Pods they manage. In the apps/v1 API, a Deployment’s selector cannot be changed after the Deployment is created, so it is worth planning selectors up front. |
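
To make the relationship between the two rows concrete, here is a minimal sketch of equality-based selector matching. The `matches` helper and the hash values are made up for illustration; real selectors also support set-based expressions, which this sketch ignores.

```python
def matches(selector, pod_labels):
    """Return True when every matchLabels pair is present on the Pod."""
    wanted = selector.get("matchLabels", {})
    return all(pod_labels.get(key) == value for key, value in wanted.items())


# Hypothetical Pods from two revisions of the same Deployment.
old_pod = {"app": "web", "pod-template-hash": "5d4f8c7b9"}
new_pod = {"app": "web", "pod-template-hash": "7c9d6f5b4"}

# The Deployment selects on the app label only, so it owns both revisions.
deployment_selector = {"matchLabels": {"app": "web"}}
# Each ReplicaSet also selects on the hash, so it owns only its own Pods.
new_replicaset_selector = {
    "matchLabels": {"app": "web", "pod-template-hash": "7c9d6f5b4"}
}

print(matches(deployment_selector, old_pod), matches(deployment_selector, new_pod))
print(matches(new_replicaset_selector, old_pod), matches(new_replicaset_selector, new_pod))
```

The Deployment selector matches both Pods, while the ReplicaSet selector matches only the Pod carrying its own hash.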

Updating a Deployment and Rollover (aka multiple updates in-flight)

To begin, it is important to understand the concept of a Deployment in Kubernetes. A Deployment is a higher-level abstraction that manages the deployment of your application software. It ensures that the desired number of replicas are running at all times, and it can handle rolling updates to your application.

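Kubernetes also supports rollover, which means multiple updates can be in flight at the same time. If you update a Deployment while an earlier rollout is still in progress, the Deployment creates a new ReplicaSet for the latest change and starts scaling it up immediately. It does not wait for the earlier rollout to finish; the ReplicaSet it was previously scaling up joins the list of old ReplicaSets and is scaled down. During the transition, Pods from several versions of your application can be running side by side.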

To achieve this, Kubernetes uses a strategy called rolling updates. This strategy works by gradually replacing instances of the old version with instances of the new version. It does this by creating a new ReplicaSet with the updated version, and then slowly scaling down the old ReplicaSet while scaling up the new one.

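During this process, the Deployment keeps the number of running Pods within bounds set by two fields. “maxUnavailable” limits how many Pods may be unavailable below the desired count, and “maxSurge” limits how many extra Pods may exist above it. Both default to 25%, which limits the loss of capacity during an update.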
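
The following sketch simulates how a rolling update might proceed under the Deployment fields “maxSurge” and “maxUnavailable”. It is a simplified model, not the controller’s actual code: it uses absolute numbers instead of percentages and assumes every new Pod becomes ready within the step that creates it.

```python
def rolling_update(desired, max_surge, max_unavailable):
    """Return the (old, new) replica counts after each step of a simplified rollout."""
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be zero")
    old, new = desired, 0
    steps = [(old, new)]
    while old > 0 or new < desired:
        # Scale up: the total number of Pods may not exceed desired + max_surge.
        new += min(desired - new, desired + max_surge - (old + new))
        # Scale down: the total may not fall below desired - max_unavailable.
        old -= min(old, (old + new) - (desired - max_unavailable))
        steps.append((old, new))
    return steps


for old, new in rolling_update(desired=4, max_surge=1, max_unavailable=1):
    print(f"old={old} new={new} total={old + new}")
```

At every step the total stays between `desired - max_unavailable` and `desired + max_surge`, which is the guarantee that lets the update proceed without taking the whole application offline.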

The rollout strategy is part of the Deployment manifest. The “.spec.strategy” field selects either “RollingUpdate”, which is the default, or “Recreate”, which terminates all existing Pods before creating new ones. The same manifest carries the number of replicas and any additional metadata that may be required.

By mastering the art of updating a Deployment and performing rollouts effectively, you can ensure that your application remains reliable and continuously improves over time. This is essential in today’s DevOps environment, where quick and efficient updates are necessary to keep up with the ever-changing product lifecycle.

Rolling Back a Deployment and Checking Rollout History of a Deployment

Rolling back a deployment is a crucial task in managing Kubernetes deployments. In case a new deployment causes issues or introduces bugs, it’s important to be able to quickly roll back to a previous stable version.

To roll back a deployment, use the Kubernetes command-line tool, kubectl. First, run the “kubectl rollout history” command with the deployment name to view its rollout history. This lists each revision of the deployment along with its change cause, which is taken from the “kubernetes.io/change-cause” annotation when one is set. Adding the “--revision” flag with a revision number shows the details of that revision.

Once you have identified the revision you want to roll back to, run the “kubectl rollout undo” command with the deployment name and the “--to-revision” flag set to that revision number. Without the flag, the command reverts to the previous revision. Either way, this initiates the rollback and reverts the deployment’s Pod template to the chosen revision.
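
As a small sketch of how these two commands fit together in a script, the helpers below build the argument lists for them. The function names and the deployment name “web” are assumptions for illustration; the kubectl subcommands and the “--revision” and “--to-revision” flags are the ones kubectl provides.

```python
import shlex


def rollout_history_cmd(deployment, revision=None):
    """Build the kubectl command that lists revisions, or details one revision."""
    cmd = ["kubectl", "rollout", "history", f"deployment/{deployment}"]
    if revision is not None:
        cmd.append(f"--revision={revision}")
    return cmd


def rollout_undo_cmd(deployment, to_revision=None):
    """Build the kubectl command that rolls back to a revision (previous if omitted)."""
    cmd = ["kubectl", "rollout", "undo", f"deployment/{deployment}"]
    if to_revision is not None:
        cmd.append(f"--to-revision={to_revision}")
    return cmd


# Hypothetical deployment name and revision number.
print(shlex.join(rollout_history_cmd("web")))
print(shlex.join(rollout_undo_cmd("web", to_revision=2)))
```

On a machine with cluster access, each list could be passed to `subprocess.run(cmd, check=True)` to execute it.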

It’s worth noting that rolling back a deployment may not always be a straightforward process, especially if the rollback involves changes to the underlying infrastructure or dependencies. Therefore, it’s important to have a well-defined rollback strategy in place and regularly test it to ensure its effectiveness.

By mastering Kubernetes deployment strategies, you can confidently handle deployment rollbacks and ensure the reliability of your applications. This is especially important in the context of DevOps and the product lifecycle, where the ability to quickly respond to issues and provide a seamless customer experience is crucial.

To enhance your Kubernetes deployment strategies, consider keeping your manifests in version control so that every change is reviewed and reproducible, and placing a load balancer or ingress controller such as Nginx in front of your pods. Additionally, organizing your deployments using namespaces can help manage and isolate different applications or environments.

Rolling Back to a Previous Revision and Scaling a Deployment

To roll back to a previous revision, you can use the Kubernetes command-line tool or the Kubernetes API. When you specify a revision, Kubernetes restores the Pod template recorded for that revision and rolls it out as a new revision. Only the Pod template is rolled back; settings such as the replica count keep their current values. This feature is especially useful when deploying updates or bug fixes, as it provides a safety net in case something goes wrong.

Scaling a deployment is another important aspect of Kubernetes. As your application grows and user demand increases, you need to be able to handle the additional load. Kubernetes allows you to scale your deployments horizontally by adding more instances of your application. This ensures optimal performance and efficient resource utilization.

To scale a deployment, you can use the Kubernetes command-line tool, for example “kubectl scale” with the “--replicas” flag, or the Kubernetes API. Once you specify the number of replicas, Kubernetes creates or removes pods to match it, and a Service in front of the deployment spreads requests across the available pods. This helps your application handle increased traffic and requests.
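
A minimal sketch of the scaling command, built as an argument list so it can be reused in scripts. The helper `scale_cmd` and the deployment name “web” are assumptions for this example; “kubectl scale” and its “--replicas” flag are part of kubectl.

```python
import shlex


def scale_cmd(deployment, replicas):
    """Build the kubectl command that sets a Deployment's replica count."""
    if replicas < 0:
        raise ValueError("replicas must be zero or greater")
    return ["kubectl", "scale", f"deployment/{deployment}", f"--replicas={replicas}"]


# Hypothetical deployment name and target size.
print(shlex.join(scale_cmd("web", 5)))
```

Validating the replica count before calling kubectl is a design choice of this sketch; it catches an obvious mistake without needing a round trip to the cluster.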

By mastering the strategies of rolling back to a previous revision and scaling deployments, you can effectively manage your applications in a Kubernetes environment. These techniques provide flexibility, reliability, and scalability, allowing you to deliver high-quality services to your users.

Proportional scaling and Pausing and Resuming a rollout of a Deployment

Proportional scaling applies when a deployment that uses the rolling update strategy is scaled while a rollout is in progress or paused. In that situation more than one ReplicaSet is active, so the Deployment controller spreads the additional replicas across the existing active ReplicaSets in proportion to their size. ReplicaSets with more replicas receive a larger share, and ReplicaSets with zero replicas are not scaled up. This reduces the risk of concentrating all the new capacity on a version that may turn out to be unhealthy.

To scale your deployment, you can use the Kubernetes command line interface (CLI) or the Kubernetes API. Once you specify the desired number of replicas, Kubernetes adjusts the number of pods running your application to match.
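
In the Deployment controller, proportional scaling means that replicas added in the middle of a rollout are split across the active ReplicaSets according to their current size. The sketch below approximates that idea with a largest-remainder split. It is an illustration under stated assumptions, not the controller’s exact arithmetic; the ReplicaSet names and figures are made up, and only scaling up is handled.

```python
def proportional_scale(current, desired, max_surge=0):
    """Split the extra replicas across ReplicaSets in proportion to their size."""
    total = sum(current.values())
    # During a rollout the controller may run up to desired + max_surge Pods.
    extra = desired + max_surge - total
    if total <= 0 or extra < 0:
        raise ValueError("sketch only handles scaling up a non-empty rollout")
    result, remainders = {}, {}
    for name, size in current.items():
        share, remainders[name] = divmod(extra * size, total)
        result[name] = size + share
    # Hand out replicas lost to rounding, largest remainder first.
    leftover = desired + max_surge - sum(result.values())
    for name in sorted(current, key=remainders.get, reverse=True)[:leftover]:
        result[name] += 1
    return result


# A rollout stuck with 8 old and 5 new Pods, then scaled from 10 to 15 replicas.
print(proportional_scale({"old-rs": 8, "new-rs": 5}, desired=15, max_surge=3))
```

With these illustrative figures, five extra replicas are available: three go to the larger old ReplicaSet and two to the new one.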

Another important aspect of Kubernetes deployment strategies is the ability to pause and resume a rollout. This feature allows you to temporarily halt the deployment process, giving you the opportunity to assess any issues or make necessary changes before continuing. Pausing a rollout ensures that any updates or changes won’t disrupt the stability of your application.

To pause a rollout, run “kubectl rollout pause” with the deployment name, or set the “.spec.paused” field to true through the API. While the deployment is paused, changes to its Pod template are recorded but do not trigger a new rollout. Once you’re ready to proceed, run “kubectl rollout resume”, and Kubernetes rolls out all the pending changes together. Note that a paused deployment cannot be rolled back until it is resumed.
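
The toy model below illustrates the effect of pausing: changes to the Pod template made while a deployment is paused are held back and rolled out together on resume. The class `PausableDeployment` and its fields are invented for this sketch and only mimic the behavior; on a real cluster the equivalent actions are “kubectl rollout pause” and “kubectl rollout resume”.

```python
class PausableDeployment:
    """Toy model: template edits trigger a rollout unless the deployment is paused."""

    def __init__(self, image):
        self.template = {"image": image}
        self.paused = False
        self.rollouts = []      # templates that were actually rolled out
        self._pending = False   # True when edits are waiting for a resume

    def set(self, **changes):
        self.template.update(changes)
        if self.paused:
            self._pending = True
        else:
            self._rollout()

    def pause(self):
        self.paused = True

    def resume(self):
        self.paused = False
        if self._pending:
            self._rollout()

    def _rollout(self):
        self.rollouts.append(dict(self.template))
        self._pending = False


# Hypothetical image tags and resource setting.
deployment = PausableDeployment("nginx:1.24")
deployment.pause()
deployment.set(image="nginx:1.25")
deployment.set(cpu_limit="200m")
print(len(deployment.rollouts))  # nothing rolled out while paused
deployment.resume()
print(deployment.rollouts)       # one rollout carrying both changes
```

Batching several edits into one rollout this way avoids creating an intermediate revision for each individual change.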

By mastering Kubernetes deployment strategies like proportional scaling and pausing and resuming rollouts, you can ensure the reliability and efficiency of your applications. These techniques allow you to easily scale your deployment to meet demand and make necessary adjustments without interrupting the user experience.

Additionally, Kubernetes provides features such as Services for load balancing and the HorizontalPodAutoscaler for automatic scaling, which further enhance the performance and reliability of your deployment. With these tools, Kubernetes is a strong platform for managing and orchestrating your containerized applications.

Complete Deployment and Failed Deployment

When it comes to deploying applications using Kubernetes, there are two possible outcomes: a successful deployment or a failed deployment. Understanding both scenarios is crucial for mastering Kubernetes deployment strategies.

In a complete deployment, your application is successfully deployed and running on the Kubernetes cluster. Kubernetes marks a deployment as complete when all of its replicas have been updated to the latest version, all of them are available, and no old replicas are still running. You can check this with “kubectl rollout status”, which returns a zero exit code once the rollout has completed. A complete deployment means that your application is accessible to users and can handle the expected load.

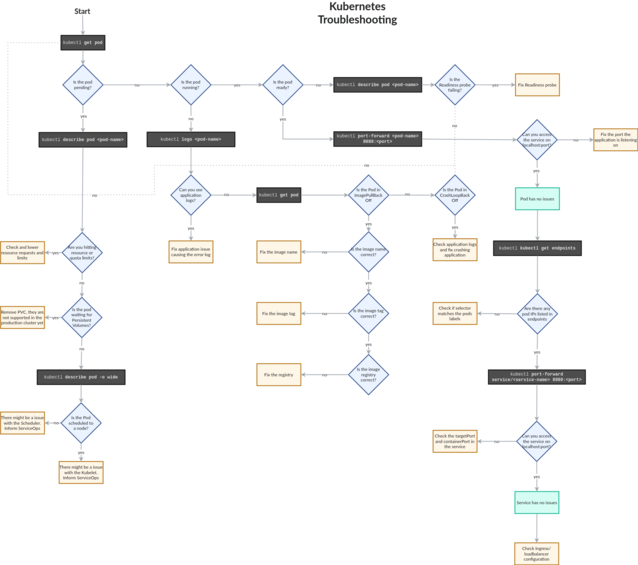

However, there are times when deployments can fail. This can happen due to various reasons such as configuration errors, resource constraints, or networking issues. When a deployment fails, it means that the application is not running as intended or not running at all.

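To troubleshoot a failed deployment, you need to investigate the conditions, events, and logs provided by Kubernetes. The “kubectl describe deployment” command shows the deployment’s conditions: when a rollout makes no progress within “.spec.progressDeadlineSeconds” (600 seconds by default), Kubernetes sets the Progressing condition to False with the reason ProgressDeadlineExceeded. The “kubectl logs” command shows the output of individual pods. By analyzing this information, you can identify the root cause of the failure and take appropriate actions to fix it.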
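
One way to tell the two outcomes apart in a script is to read the Progressing condition from the deployment’s status. The sketch below assumes the input is the parsed JSON of a Deployment object, such as the output of “kubectl get deployment” with “-o json”; the helper `rollout_state` and the sample objects are made up, while the condition type and reasons are the ones Kubernetes reports.

```python
def rollout_state(deployment):
    """Classify a Deployment as complete, failed, or progressing from its conditions."""
    for cond in deployment.get("status", {}).get("conditions", []):
        if cond.get("type") != "Progressing":
            continue
        if cond.get("status") == "False" and cond.get("reason") == "ProgressDeadlineExceeded":
            return "failed"
        if cond.get("status") == "True" and cond.get("reason") == "NewReplicaSetAvailable":
            return "complete"
    return "progressing"


# Hand-written sample status blocks, not captured from a real cluster.
complete = {"status": {"conditions": [
    {"type": "Available", "status": "True", "reason": "MinimumReplicasAvailable"},
    {"type": "Progressing", "status": "True", "reason": "NewReplicaSetAvailable"},
]}}
failed = {"status": {"conditions": [
    {"type": "Progressing", "status": "False", "reason": "ProgressDeadlineExceeded"},
]}}

print(rollout_state(complete), rollout_state(failed), rollout_state({}))
```

A check like this could gate a pipeline step, although “kubectl rollout status” already covers the common case.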

One common strategy to improve the reliability of deployments is to use a rolling update strategy. This strategy allows you to update your application without causing downtime. By gradually updating the application, you can minimize the impact on users and ensure a smooth transition.

Another important aspect of successful deployments is load balancing. A Kubernetes Service distributes traffic across the pods that match its selector. This helps your application handle high traffic volumes and provides a smoother user experience.

In addition to load balancing, namespaces are used to create isolated environments within a Kubernetes cluster. This allows different teams or applications to have their own dedicated resources and prevents interference between them.

To make the most out of Kubernetes deployments, it is recommended to have a solid understanding of Docker. Docker is an open-source platform that enables you to package and distribute applications as containers. By using Docker alongside Kubernetes, you can easily deploy and manage applications in a scalable and efficient manner.

Operating on a failed deployment and Clean up Policy

To begin with, it is essential to understand the common reasons for deployment failures. These can include issues with resource allocation, conflicts between different containers, or errors in the configuration files. By analyzing the logs and error messages, you can pinpoint the root cause and take appropriate action.

One effective strategy for operating on a failed deployment is to roll back to the previous working version. Kubernetes allows you to easily switch between different versions of your application, providing a fallback option in case of failures. This can be achieved by using the rollback feature or by leveraging version control systems.

Another important aspect of managing deployments is the clean-up policy. Each rollout leaves behind an old ReplicaSet so that you can roll back to it, and the “.spec.revisionHistoryLimit” field controls how many of these old ReplicaSets are retained (10 by default). Older ReplicaSets beyond the limit are garbage-collected in the background. Setting the limit to 0 removes all history, which means the deployment can no longer be rolled back.

Beyond revision history, resources left over from a failed deployment, such as unused services or namespaces, can waste capacity and conflict with future deployments. To ensure efficient clean-up, you can automate their removal with scripts. This not only saves time but also reduces the chances of human error. Additionally, regularly monitoring and auditing your deployments can help identify any lingering resources that need to be cleaned up.
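
For Deployments, the retention of old revisions is governed by the “.spec.revisionHistoryLimit” field, which defaults to 10. The sketch below shows the retention rule in its simplest form: keep the newest revisions up to the limit and mark the rest for removal. The helper name and revision numbers are hypothetical, and the real controller applies further conditions that this model leaves out.

```python
def replica_sets_to_prune(old_revisions, revision_history_limit=10):
    """Return the revisions of old ReplicaSets that exceed the retention limit."""
    if revision_history_limit < 0:
        raise ValueError("revision_history_limit must be zero or greater")
    ordered = sorted(old_revisions)
    excess = max(0, len(ordered) - revision_history_limit)
    # The oldest revisions are removed first.
    return ordered[:excess]


# Hypothetical revision numbers of old ReplicaSets.
print(replica_sets_to_prune([1, 2, 3, 4, 5], revision_history_limit=2))
print(replica_sets_to_prune([1, 2, 3, 4, 5]))
```

With a limit of 2, revisions 1 to 3 are pruned and revisions 4 and 5 remain available as rollback targets; with the default limit nothing is pruned.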

What is a Kubernetes Deployment Strategy?

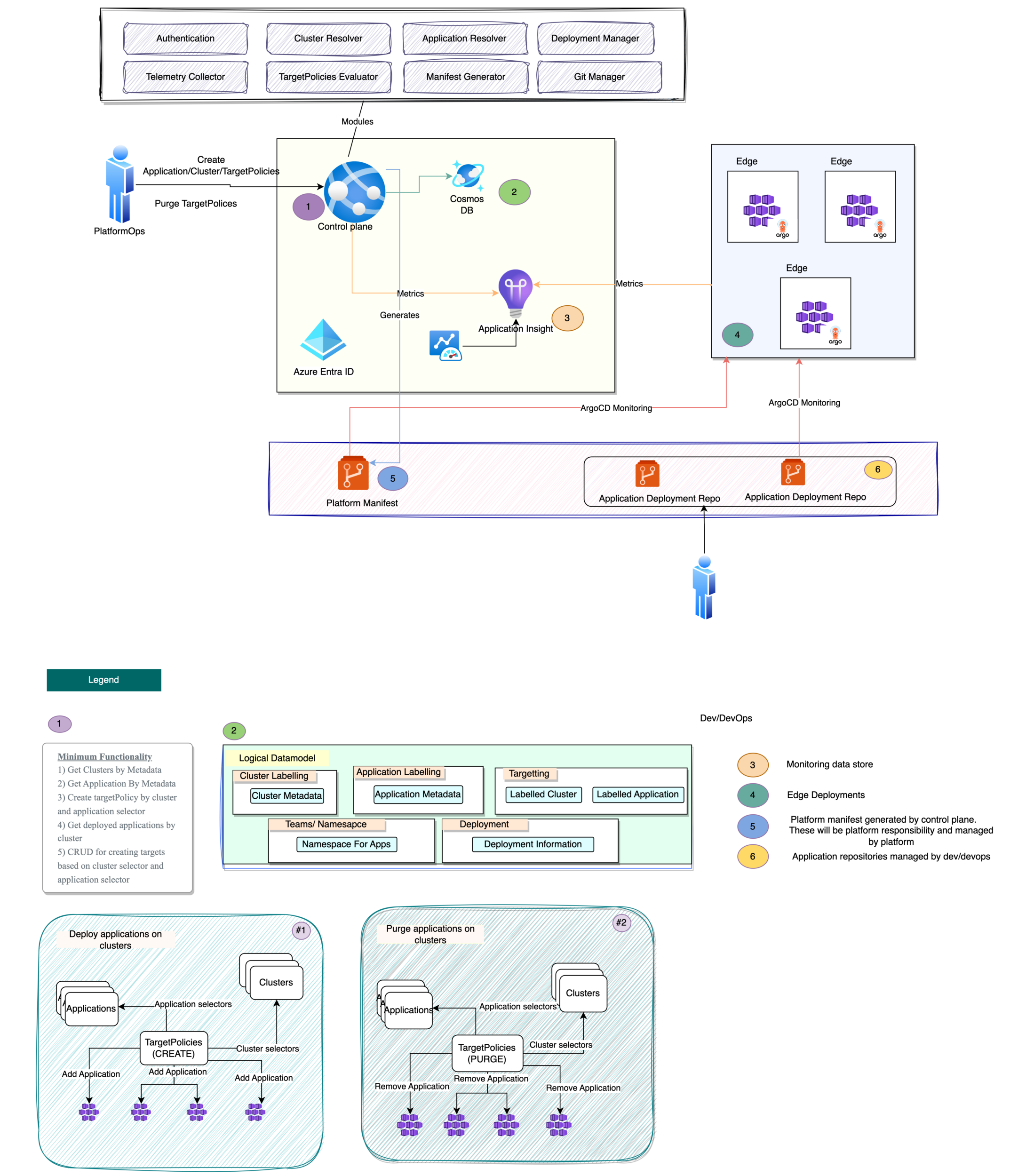

A Kubernetes deployment strategy defines how a new version of an application replaces the running one in a Kubernetes cluster. It determines how pods are created, updated, and retired, and how traffic moves to the new version while the desired workload is still being served.

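One popular deployment strategy is rolling updates, which allows for updates with little or no downtime. This strategy involves gradually updating the application by replacing old instances with new ones. It keeps the application available to users because old instances are terminated only as new ones become ready, within the limits set by “maxSurge” and “maxUnavailable”. Rolling update is the default for deployments; the other built-in option, Recreate, stops all old instances before starting new ones and therefore involves downtime.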

Another strategy is blue-green deployment, which involves running two identical environments, one “blue” and one “green.” The blue environment represents the current production environment, while the green environment is used for testing updates or new features. Once the green environment is deemed stable, traffic is redirected from blue to green, making it the new production environment. Kubernetes has no built-in blue-green strategy type; a common approach is to run each environment as its own deployment and switch a Service’s selector from one to the other.
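
One common way to implement the traffic switch is to change the label selector of the Service that fronts both environments. The sketch below assumes each environment is a separate deployment whose pods carry a `version` label set to “blue” or “green”; that label key, the Service name, and the helper `switch_traffic` are assumptions for this example.

```python
import copy


def switch_traffic(service, color):
    """Return a copy of the Service manifest whose selector points at one color."""
    if color not in ("blue", "green"):
        raise ValueError("color must be 'blue' or 'green'")
    updated = copy.deepcopy(service)
    updated["spec"]["selector"]["version"] = color
    return updated


# Hypothetical Service that currently sends traffic to the blue pods.
service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "web"},
    "spec": {"selector": {"app": "web", "version": "blue"}, "ports": [{"port": 80}]},
}

cutover = switch_traffic(service, "green")
print(cutover["spec"]["selector"])
```

Applying the updated manifest moves traffic to green in one step, and applying the original again moves it back, which is what makes blue-green rollbacks quick.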

Canary deployment is another strategy that involves gradually rolling out updates to a subset of users or servers. This allows new features or updates to be tested on a small share of real traffic before they are deployed to the entire user base.
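
A simple way to approximate a canary on plain Kubernetes is to run a stable deployment and a canary deployment behind the same Service, so that the share of traffic roughly follows the share of pods. The sketch below computes that replica split; the helper `canary_split` is hypothetical, and the approach assumes traffic is spread about evenly across pods.

```python
def canary_split(total, canary_percent):
    """Return (stable, canary) replica counts for a given canary percentage."""
    if total < 0 or not 0 <= canary_percent <= 100:
        raise ValueError("total must be >= 0 and canary_percent between 0 and 100")
    # Ceiling division so that any non-zero percentage yields at least one canary Pod.
    canary = -(-total * canary_percent // 100)
    return total - canary, canary


# Illustrative rollout: widen the canary in stages.
for percent in (10, 25, 50, 100):
    print(percent, canary_split(20, percent))
```

Finer-grained traffic control, such as routing by percentage regardless of pod count, usually needs an ingress controller or service mesh rather than replica ratios.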

In addition to these strategies, Kubernetes also provides auto-scaling through the HorizontalPodAutoscaler, which automatically adjusts the number of instances based on observed metrics such as CPU utilization. This helps the application handle fluctuations in traffic and maintain good performance.
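
The HorizontalPodAutoscaler bases its decision on the ratio between the current metric value and the target value. The sketch below applies that documented ratio and clamps the result to minimum and maximum bounds; the function name and sample numbers are assumptions, and details such as the tolerance band and stabilization windows are left out.

```python
import math


def desired_replicas(current, metric, target, min_replicas=1, max_replicas=10):
    """Replica count from the metric-to-target ratio, clamped to the given bounds."""
    wanted = math.ceil(current * (metric / target))
    return max(min_replicas, min(max_replicas, wanted))


# Four Pods averaging 200m CPU against a 100m target: scale out.
print(desired_replicas(current=4, metric=200, target=100))
# Four Pods averaging 50m CPU against a 100m target: scale in.
print(desired_replicas(current=4, metric=50, target=100))
```

The bounds matter in practice: without a maximum, a metric spike could request far more pods than the cluster can schedule.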

By mastering Kubernetes deployment strategies, you can ensure that your applications are deployed and managed efficiently, with minimal downtime and maximum scalability. This can greatly enhance the reliability and performance of your applications, enabling you to meet the demands of your users effectively.

Whether you are deploying a small application or managing a large-scale production environment, understanding Kubernetes deployment strategies is essential. With the rapid growth of cloud-native technologies, such as Docker and Kubernetes, having the skills to deploy and manage applications in a scalable and efficient manner is highly valuable.
