In the fast-paced world of cloud computing, having the right monitoring tools is essential to ensure optimal performance and reliability.

Automated Monitoring Deployment

By incorporating automation into your monitoring strategy, you can streamline the deployment process and ensure consistent performance across your applications. This not only saves time but also enhances the overall efficiency of your operations.

Cloud Foundry lets you integrate monitoring tools into your workflow, which makes the system simpler to manage and optimize. Combining automation with monitoring lets you address potential issues before they affect your users.

Take advantage of Cloud Foundry’s monitoring ecosystem to improve the reliability and security of your applications. Automated monitoring helps you catch potential issues early and keep performance stable.

DevOps Support

One popular tool for Cloud Foundry monitoring is Dynatrace, which provides real-time insights into your applications and infrastructure. It can help you identify performance issues and bottlenecks, allowing you to optimize your systems for maximum efficiency.

Another area that needs monitoring is serverless computing, which scales your applications dynamically based on demand. Backing services that these applications often depend on, such as the Redis data store, should be monitored as well, so that you can confirm the whole stack is running efficiently.

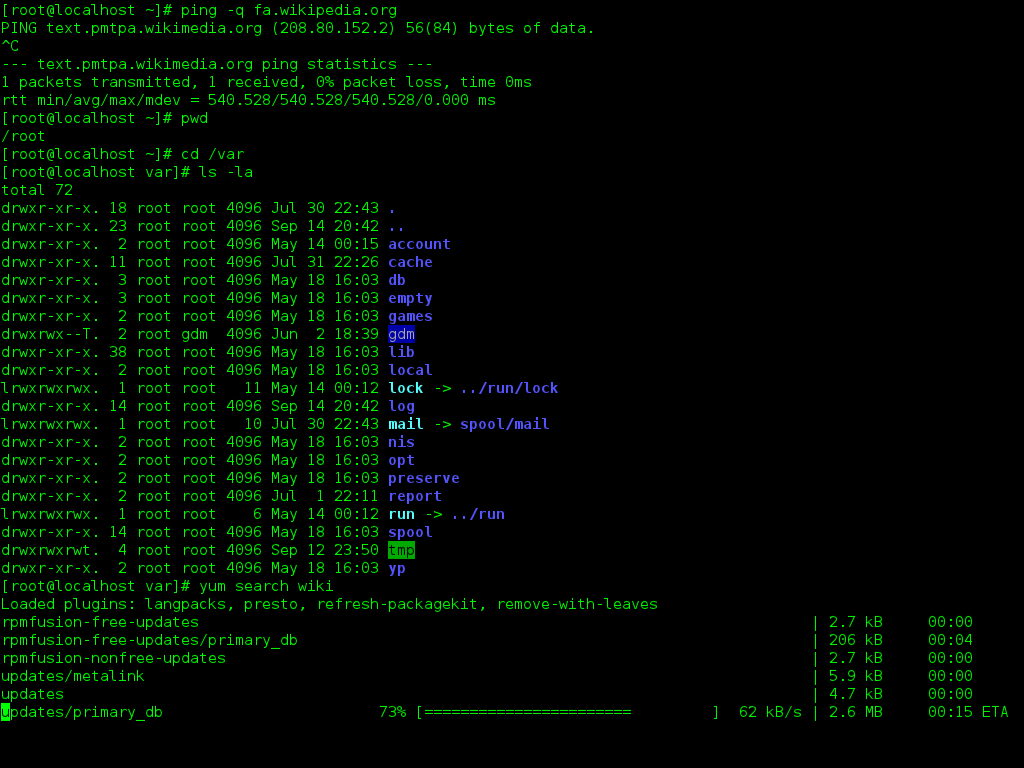

In addition to monitoring tools, it’s also important to have a solid understanding of Linux and the command-line interface. Taking Linux training can help you navigate your systems more effectively and troubleshoot any issues that may arise.

Core Technologies

When it comes to monitoring Cloud Foundry, there are several core technologies that play a crucial role in ensuring optimal performance. One of the key technologies is BOSH, which is a deployment and lifecycle management tool that helps with scaling and maintaining Cloud Foundry environments. DevOps practices are also essential, as they help automate processes and streamline operations for better efficiency.

Another important technology to consider is serverless computing, which allows for running applications without the need to manage servers. **Dynatrace** is a popular monitoring tool that provides insights into application performance and user experience, making it a valuable asset for monitoring Cloud Foundry environments.

**Analytics** tools can also be integrated to track and analyze data generated by Cloud Foundry applications, providing valuable insights for optimization.

Metrics Access

With **Metrics Access**, you can easily monitor key performance indicators, such as response times, throughput, error rates, and more. This data allows you to make informed decisions about optimizing your applications and infrastructure.
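
As a rough illustration of the indicators listed above, the sketch below derives average response time, throughput, and error rate from a batch of request records. The `Request` record and the `summarize` function are hypothetical names invented for this example, not part of Cloud Foundry or any monitoring product, and treating only 5xx statuses as errors is an assumption you may want to change.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Request:
    """One observed request (hypothetical record shape, not a Cloud Foundry type)."""
    duration_ms: float
    status: int


def summarize(requests: list[Request], window_seconds: float) -> dict[str, float]:
    """Compute average response time, throughput, and error rate for one window."""
    if not requests or window_seconds <= 0:
        return {"avg_response_ms": 0.0, "throughput_rps": 0.0, "error_rate": 0.0}
    # Assumption: only server-side failures (HTTP 5xx) count as errors.
    errors = sum(1 for r in requests if r.status >= 500)
    return {
        "avg_response_ms": mean(r.duration_ms for r in requests),
        "throughput_rps": len(requests) / window_seconds,
        "error_rate": errors / len(requests),
    }
```

In practice a monitoring tool computes these values for you; the point of the sketch is only to show what each indicator measures.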

By utilizing tools like BOSH, you can collect metrics from various components within your Cloud Foundry environment, providing a comprehensive view of your system. Syslog integration and command-line interfaces further enhance your monitoring capabilities, enabling you to troubleshoot issues efficiently.

Access to metrics is crucial for ensuring the success of your applications in the cloud. By utilizing Cloud Foundry monitoring tools, you can proactively identify and address potential issues, ultimately improving the overall performance and reliability of your applications.

Log and Metric Sources

When it comes to **Cloud Foundry monitoring tools**, the key lies in making effective use of log and **metric sources**. Logs provide valuable insights into the behavior of your applications, while metrics offer a quantitative measurement of key performance indicators.

By using tools that can aggregate and analyze log data from various sources, such as BOSH and syslog, you can gain a comprehensive view of your system’s health and performance. Additionally, metric sources such as the command-line interface and APIs can provide real-time visibility into resource utilization and application behavior.
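
To make log aggregation from syslog a little more concrete, here is a minimal sketch that splits the header of a syslog line into fields. It assumes the lines follow the RFC 5424 layout, which you should verify for your own drain. The `SyslogRecord` type and `parse_line` function are hypothetical names for this example, and structured data plus the message body are deliberately left unparsed.

```python
import re
from typing import NamedTuple, Optional

# Header layout assumed from RFC 5424:
# <PRI>VERSION TIMESTAMP HOSTNAME APP-NAME PROCID MSGID ...
HEADER = re.compile(
    r"^<(?P<pri>\d{1,3})>(?P<version>\d{1,2}) "
    r"(?P<timestamp>\S+) (?P<hostname>\S+) (?P<app_name>\S+) "
    r"(?P<procid>\S+) (?P<msgid>\S+) (?P<rest>.*)$"
)


class SyslogRecord(NamedTuple):
    facility: int
    severity: int
    timestamp: str
    hostname: str
    app_name: str
    procid: str
    rest: str  # structured data and message, left unparsed in this sketch


def parse_line(line: str) -> Optional[SyslogRecord]:
    """Return the parsed header fields, or None if the line does not match."""
    m = HEADER.match(line)
    if m is None:
        return None
    pri = int(m["pri"])
    # RFC 5424 encodes PRI as facility * 8 + severity.
    return SyslogRecord(
        pri // 8, pri % 8, m["timestamp"], m["hostname"],
        m["app_name"], m["procid"], m["rest"],
    )
```

Returning `None` for lines that do not match keeps the caller in control of what to do with malformed input, for example counting it as its own metric.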

Monitoring tools that support **data science** and analytics can help you identify trends, anomalies, and potential issues before they impact your applications. This proactive approach can improve overall system reliability and performance.

In the era of **cloud computing** and **microservices**, having robust monitoring tools in place is essential for ensuring the smooth operation of your applications. With the right tools, you can harness the power of artificial intelligence and parallel computing to streamline operations and optimize performance.

Don’t overlook the importance of security when selecting monitoring tools. Look for features that provide real-time insights into potential security threats and vulnerabilities, helping you to bolster your system’s defenses and protect sensitive data.

Configuration Prerequisites

Before configuring your monitoring tools, familiarize yourself with the data science and artificial intelligence concepts they may rely on. Understanding these technologies will help you interpret and analyze the monitoring data effectively.

Additionally, ensure that proper security measures are in place, such as setting up secure login credentials and implementing encryption for sensitive data. This will help in safeguarding the monitoring tools and the data they collect.

Once these configuration prerequisites are met, you can use Cloud Foundry monitoring tools to track the performance and health of your applications in real time.

Data Retention Policies

When setting data retention policies, consider factors such as the type of data being stored, its sensitivity, and the potential risks associated with its retention. Data that is no longer needed should be securely deleted to minimize the risk of data breaches. Regularly auditing data retention practices can help identify areas for improvement and ensure compliance with industry standards.
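
One way to picture such a policy is as a retention window per data category, with records sorted into "keep" and "delete" sets. The sketch below does only that; the category names and day counts in `RETENTION_DAYS` are made-up placeholders, and secure deletion itself is outside its scope.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per data category, in days.
RETENTION_DAYS = {"metrics": 30, "app_logs": 14, "audit_logs": 365}


def is_expired(category: str, created_at: datetime, now: datetime) -> bool:
    """True if a record is older than the retention window for its category."""
    days = RETENTION_DAYS.get(category)
    if days is None:
        # Unknown category: keep the record so it can be reviewed, not deleted.
        return False
    return now - created_at > timedelta(days=days)


def split_by_retention(records, now):
    """Split (category, created_at) pairs into records to keep and to delete."""
    keep, delete = [], []
    for category, created_at in records:
        target = delete if is_expired(category, created_at, now) else keep
        target.append((category, created_at))
    return keep, delete
```

Keeping records of unknown categories is a cautious default for this example; an audit would then decide which window they belong to.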

By establishing clear data retention policies and utilizing monitoring tools to enforce them, organizations can better protect sensitive information and mitigate the risk of data breaches. This proactive approach to data management is essential in today’s digital landscape where data privacy and security are paramount concerns.

Alerting Configuration

By configuring alerts, you can receive real-time notifications via email, SMS, or other channels when specific conditions are met. This proactive approach allows you to address potential problems before they escalate, minimizing downtime and maximizing the reliability of your applications.

Make sure to define clear alerting rules based on key performance indicators and thresholds that are relevant to your specific use case. Regularly review and update these configurations to ensure they remain effective in detecting and addressing any issues in your Cloud Foundry environment.
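
A threshold-based rule set of the kind described above could be sketched as follows. `AlertRule`, `RULES`, and `evaluate` are hypothetical names, and the metric names and threshold values are illustrative assumptions rather than recommendations. Delivering the notification by email or SMS is left out.

```python
import operator
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AlertRule:
    """A named threshold check on one metric (hypothetical structure)."""
    name: str
    metric: str
    compare: Callable[[float, float], bool]
    threshold: float


# Illustrative rules; the thresholds are placeholders to tune per application.
RULES = [
    AlertRule("high error rate", "error_rate", operator.gt, 0.05),
    AlertRule("slow responses", "avg_response_ms", operator.gt, 500.0),
    AlertRule("low throughput", "throughput_rps", operator.lt, 1.0),
]


def evaluate(rules: list[AlertRule], metrics: dict[str, float]) -> list[str]:
    """Return the names of all rules whose condition holds for these metrics."""
    fired = []
    for rule in rules:
        value = metrics.get(rule.metric)
        # Metrics missing from the sample are skipped rather than treated as zero.
        if value is not None and rule.compare(value, rule.threshold):
            fired.append(rule.name)
    return fired
```

Keeping the rules as plain data makes them easy to review and update on a regular schedule, which is the habit the paragraph above recommends.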