Are you interested in pursuing a career in software engineering but don’t know where to start? This article will guide beginners on the path to becoming a successful software engineer.

Planning Your Career Path in Software Engineering

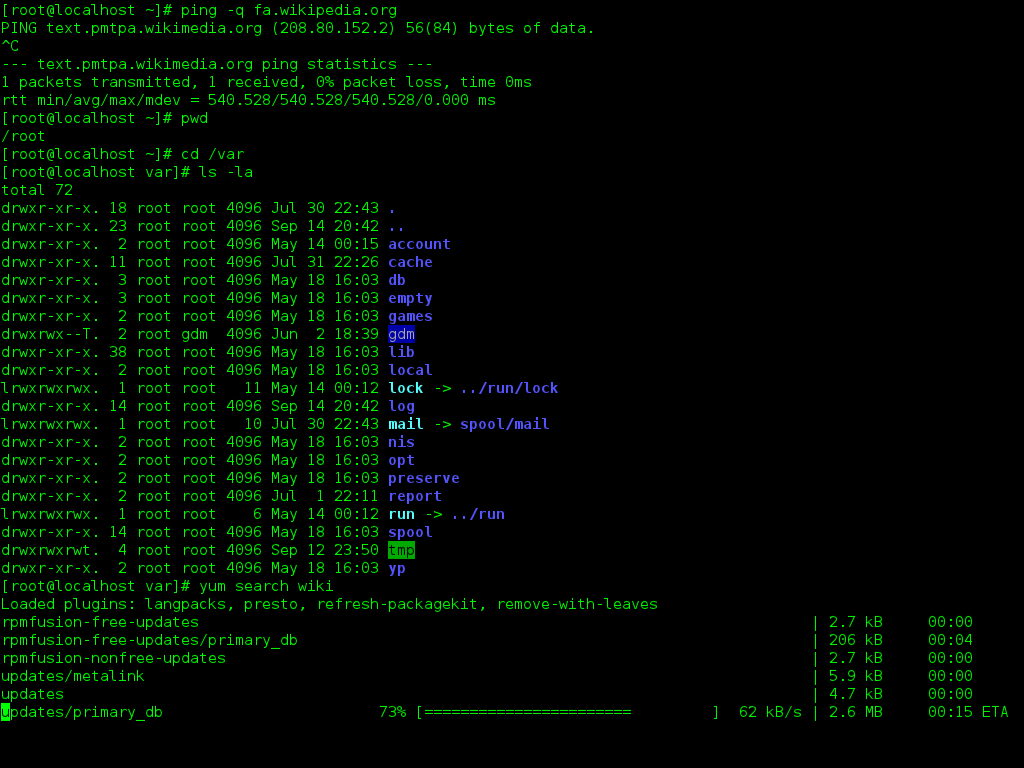

When planning your career path in **software engineering**, start with a strong foundation of knowledge and skills. **Linux training** is a practical place to begin, because Linux is widely used on servers and in development environments across the industry.

Learning Linux will not only give you a solid understanding of operating systems, but it will also help you develop important skills in **scripting** and **programming**. These skills are essential for becoming a successful software engineer and will be valuable throughout your career.

In addition to Linux training, it is important to also focus on developing your skills in **computer programming** and **problem solving**. These skills are at the core of software engineering and will help you excel in this field.

As you progress in your career, consider specializing in areas such as **mobile app development** or **web development** to further enhance your skills and knowledge. By focusing on a specific area, you can become an expert in that field and open up more opportunities for advancement.

Remember to continually seek out learning opportunities and stay up-to-date on the latest technologies and trends in the industry. This will help you stay competitive and make you a valuable asset to any company.

Gaining Experience and Building a Portfolio

Completing Linux training teaches you to use the operating system effectively, which matters because much software is developed and deployed on Linux. It also gives you a practical environment for working with scripting languages like JavaScript and Python, as well as other high-level programming languages such as Java.

Alongside Linux training, make time to study core computer science concepts such as algorithms and data structures. These are fundamental topics that every software engineer should understand in order to solve complex problems and create efficient programs.

As you gain experience through Linux training, you can start working on projects to build your portfolio. This could include developing mobile apps, creating websites, or contributing to open-source software on platforms like GitHub.

Building a strong portfolio is key to showcasing your skills to potential employers and landing your first job as a software engineer. It demonstrates your ability to work on real-world projects and your commitment to continuous learning and improvement in the field.

Getting Certified to Advance Your Career

To advance your career as a software engineer, it can help to earn relevant certifications. A Linux certification, usually earned by completing Linux training and passing an exam, is one valuable option for aspiring software engineers. It demonstrates your proficiency with operating systems and can open up opportunities in the industry.

Preparing for the certification enhances your technical skills and shows your dedication to continuous learning and improvement. Many employers in the tech industry value this kind of credential, which can make you a stronger candidate for a range of positions.

Being certified in Linux can give you a competitive edge in the job market and leave you better equipped to tackle complex technical challenges. The certification validates your expertise, giving you the confidence to take on new projects and responsibilities.

Linux skills also carry over to specialized areas such as mobile app development, web development, and data science. Combined with hands-on experience in those areas, a certification can help you stand out as a candidate.

Applying For Software Engineering Jobs Successfully

When applying for software engineering jobs, it’s important to showcase your skills and experience effectively. Make sure your resume and cover letter highlight your relevant technical skills, projects, and education. Tailor each application to the specific job requirements to stand out to recruiters.

Consider building a strong online presence by showcasing your work on platforms like GitHub. Recruiters often look at your code contributions and projects to assess your skills. Participating in open-source projects or creating your own projects can demonstrate your abilities to potential employers.

Prepare for technical interviews by practicing coding problems on websites like LeetCode. Familiarize yourself with common algorithms and data structures, and be ready to explain your thought process during the interview. Communication skills are also important, so practice explaining your solutions clearly and concisely.
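To make this concrete, here is a minimal sketch of the kind of problem you might practice, written in Python. The problem (find two numbers in a list that add up to a target) and the function name `two_sum` are illustrative assumptions, not taken from any particular site. The comments show the sort of reasoning you would be expected to say out loud in an interview.

```python
from typing import Optional


def two_sum(nums: list[int], target: int) -> Optional[tuple[int, int]]:
    """Return the indices of two numbers that add up to target, or None."""
    # A dictionary (hash map) remembers each value we have seen and its index,
    # so each lookup is constant time on average instead of a second loop.
    seen: dict[int, int] = {}
    for i, value in enumerate(nums):
        complement = target - value
        if complement in seen:
            # An earlier number plus the current one reaches the target.
            return seen[complement], i
        seen[value] = i
    # No pair adds up to the target.
    return None


if __name__ == "__main__":
    print(two_sum([2, 7, 11, 15], 9))
```

Checking every pair with two nested loops would also work, but it takes time proportional to the square of the list length. Trading a little memory for the dictionary brings that down to a single pass, and explaining that trade-off is exactly the kind of communication interviewers look for.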

Stay updated on the latest technologies and trends in the industry. Continuous learning is key to staying competitive in the field of software engineering. Consider taking online courses or attending workshops to expand your knowledge and skills.

Networking can also be a valuable tool in your job search. Attend tech events, meetups, and conferences to connect with industry professionals. Building relationships with others in the field can lead to job opportunities and valuable insights.

Finally, be persistent and patient in your job search. Landing a software engineering job can take time, but with dedication and hard work, you can achieve your goal. Keep honing your skills, seeking feedback, and applying to relevant positions to increase your chances of success.

What Programming Languages to Focus on as a Beginner

When starting your journey as a beginner in software engineering, it’s crucial to focus on learning a few key programming languages that will set a strong foundation for your career.

Python is an excellent choice for beginners due to its readability and versatility. It is used in a wide range of applications, from web development to data science.
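As a small illustration of that readability, the sketch below counts the most frequent words in a piece of text using only the standard library. The function name `most_common_words` and the sample sentence are made up for this example.

```python
from collections import Counter


def most_common_words(text: str, n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequent words in text with their counts."""
    # Lowercase the text so "The" and "the" count as the same word,
    # then split on whitespace to get a list of words.
    words = text.lower().split()
    # Counter tallies each word; most_common sorts by count, highest first.
    return Counter(words).most_common(n)


if __name__ == "__main__":
    sample = "the cat and the hat and the bat"
    print(most_common_words(sample, 2))
```

Even without much programming experience, you can read the function almost like a sentence: lowercase the text, split it into words, count them, and return the most common ones.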

JavaScript is another essential language to learn, especially for frontend development. It is the backbone of interactive web pages and can enhance user experience.

Both Python and JavaScript are high-level programming languages that are relatively easy to learn and widely used in the industry.

As you progress in your learning, you may want to explore other languages such as Java, which is used in backend development, or C++ for system programming. However, starting with Python and JavaScript will give you a solid foundation to build upon.

Remember, the key to becoming a successful software engineer is not just mastering programming languages but also developing problem-solving skills and understanding algorithms.

Don’t get overwhelmed by the vast array of programming languages out there. Start with Python and JavaScript, challenge yourself with new projects, and gradually expand to other languages as you gain experience and confidence. With the basics in place, you’ll be well prepared to tackle more complex projects and advance in your career as a software engineer.

Steps to Become a Software Engineer Without a Degree

To become a software engineer without a degree, follow these steps:

1. Start by learning the fundamentals of computer programming. Understand different programming languages, problem-solving techniques, and how to write efficient code.

2. Gain hands-on experience by working on personal projects or contributing to open-source software. This will help you build a strong portfolio to showcase your skills to potential employers.

3. Take online courses or attend workshops to deepen your knowledge of programming languages such as Java or Python. Focus on both frontend and backend development to become a well-rounded software engineer.

4. Practice coding regularly and participate in coding challenges like LeetCode to improve your problem-solving skills. This will also prepare you for coding interviews during the recruitment process.

5. Stay updated on the latest trends and technologies in the software development industry. This will help you adapt to changes and remain competitive in the job market.

6. Network with other software engineers and professionals in the industry to learn from their experiences and gain insights into different career paths within software engineering.

7. Consider pursuing certifications or completing online courses in specific areas of software engineering, such as data structures or web development. This will help you specialize in a particular field and stand out to potential employers.

8. Be proactive in your learning and seek out opportunities to further develop your skills. Take on challenging projects that push you out of your comfort zone and help you grow as a software engineer.

Personal Journey and Timeline to Becoming a Software Engineer

Becoming a software engineer is a personal journey that calls for a realistic timeline and dedication to learning. It typically starts with acquiring a solid foundation in computer science and programming languages.

Education plays a crucial role in this process, whether it’s through formal schooling or self-learning. Many successful software engineers have pursued a Bachelor’s degree in computer science or a related field to gain the necessary knowledge and skills.

However, becoming a software engineer is not just about academic credentials. It’s also about problem solving, creativity, and the ability to think critically. These skills can be honed through practice, whether it’s through coding challenges, personal projects, or even contributing to open-source projects.

One key aspect of becoming a software engineer is gaining proficiency in programming languages such as Java and Python. These languages are widely used in the industry and having a strong grasp of them can open up many opportunities for aspiring software engineers.

It’s also important to understand the difference between frontend and backend development, as well as the concepts of algorithms, data structures, and API design. These are all essential components of software engineering that one must master to succeed in the field.

In addition to technical skills, communication and collaboration are also crucial for software engineers. Being able to effectively communicate with team members, stakeholders, and clients is essential for delivering successful projects.

Advice on Changing Careers to Pursue Software Engineering

– Consider enrolling in a **Linux training** course to gain a solid foundation in operating systems and command line operations.

– Familiarize yourself with **high-level programming languages** like **Java** and **Python** to start building your coding skills.

– Practice writing code and solving problems to improve your **algorithmic thinking** and **problem-solving abilities**.

– Work on **personal projects** or contribute to **open-source projects** to build your portfolio and gain real-world experience.

– Attend **coding bootcamps**, **workshops**, or **meetups** to network with other software engineers and learn from industry professionals.

– Prepare for **coding interviews** by practicing common **algorithms** and **data structures**, and be ready to demonstrate your problem-solving abilities.

– Consider pursuing a **bachelor’s degree** in computer science or a related field to deepen your understanding of software engineering concepts.

– Stay current on industry trends and best practices by reading **documentation**, following **tech blogs**, and participating in online forums.

– Be prepared to **continuously learn** and **adapt** in this fast-paced field, as technology is constantly evolving.

– Trust in your ability to learn and grow as a software engineer, and have the **confidence** to pursue your passion for coding.